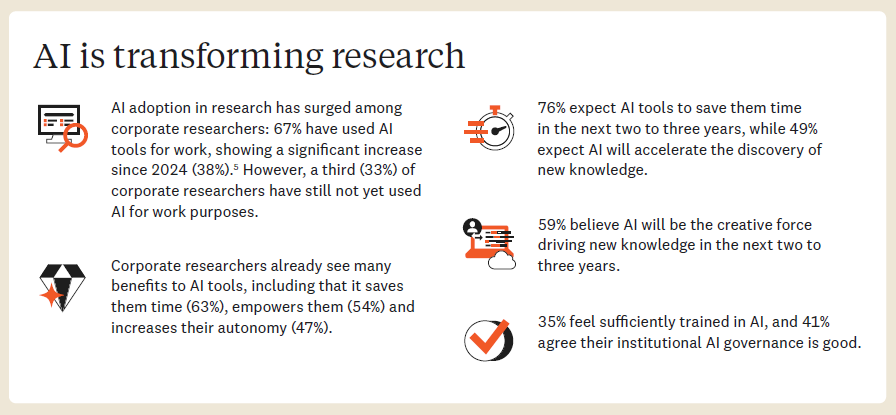

Elsevier’s latest global study, Researcher of the Future, reveals that one-third (33%) of corporate researchers have not yet integrated artificial intelligence (AI) into their work. While most current users report clear productivity gains, the findings highlight a growing divide in AI adoption across the research community and a missed opportunity for corporate R&D teams to maximise value.

The AI Opportunity in Corporate Research

The survey of academic and corporate researchers provides new insights into how the global research community perceives the role of AI in shaping the future of discovery. Among corporate researchers already using AI, the benefits are striking:

- 63% say AI tools save them time.

- 54% believe AI empowers them in their roles.

- 47% report that AI gives them greater autonomy.

Looking ahead, 76% of respondents expect AI to save them time over the next two to three years. Almost half (49%) believe AI will help drive new knowledge, and 44% expect it to improve the overall quality of their research.

Why Adoption Stalls

The report identifies several barriers to broader adoption of AI across corporate research environments:

- Skills and governance: Only 35% of corporate researchers have received adequate AI training, and just 41% believe their organisation has strong AI governance in place.

- Trust and transparency: While 46% agree AI provides useful answers, 29% find them unhelpful, and only 27% say they trust AI-generated responses.

- Limited high-value use: Almost half of respondents would not use AI to draft papers, generate hypotheses, or design experiments, which suggests a lack of confidence in general-purpose AI tools for specialised scientific work.

Key findings from Elsevier’s Researcher of the Future report illustrate rising AI adoption in corporate R&D.

Bridging the Confidence Gap

To build greater trust, researchers emphasised the need for transparency, traceability, and tools designed specifically for scientific contexts. Key features that would encourage wider use of AI in research include:

- 70%: automatic citations and transparent sourcing;

- 64%: explicit factual accuracy and safety validation;

- 63%: secure handling of confidential research data.

Collectively, these findings point to the need for research-specific, verifiable AI solutions built on trusted scientific content.

Driving Responsible AI for Research Integrity

Stuart Whayman, President of Corporate Markets at Elsevier, said: “AI has enormous potential to accelerate discovery, but general-purpose tools were never built for the precision and traceability that scientific research requires. As this study shows, researchers need transparent AI that cites trusted sources and explains its reasoning. Above all, it must meet the same standards of evidence and reproducibility as their own work.”

The full report, Researcher of the Future, is available from Elsevier’s website.